Last Week In Claude Code #2 — Skill-Creator, Ultrathink, /voice, Ollama Subagents

Test Your Skills, Talk to Claude, and Run Free Parallel Agents

Hello, thank you for being part of our Claude Code Masterclass community.

We are now 21k+ members (still overwhelmed by your support, your pledges, and good vibes) — it’s unbelievable, and I’m grateful for every one of you!

I am working day and night to deliver the best practical Claude Code education material.

This is issue #2 of the Last Week In Claude Code series, covering the Claude Code skill-creator, ultrathink, /voice, and Ollama subagents.

Last week brought some major Claude Code updates: a framework to test whether your agent skills work, the return of Ultrathink, native voice input, and a way to run subagents without burning tokens.

What Happened Last Week In Claude Code?

#1) Skill-Creator Plugin — Finally Test If Your Agent Skills Work

Agent skills are notorious for fooling you into believing they work: a skill can fail one day and work fine the next.

Anthropic released the Skill-Creator plugin — a testing framework to measure and refine your agent skills.

What It Does

Evals — Define test prompts and expectations, then check if outputs match

Benchmarks — Track pass rate, elapsed time, and token usage

A/B Comparisons — Blind testing between skill versions

Description Optimizer — Improves skill triggering accuracy
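To make the evals and benchmarks idea concrete, here is a minimal Python sketch of a pass-rate check. It is not the plugin's actual file format or API: `EvalCase`, `run_evals`, and `fake_skill` are hypothetical names made up for illustration, and the "expectation" is simplified to a substring match. Token usage is left out because a stand-in function has none to report.

```python
from dataclasses import dataclass
from typing import Callable
import time


@dataclass
class EvalCase:
    """One test prompt plus the substrings we expect in the output (hypothetical format)."""
    prompt: str
    expected_substrings: list[str]


def run_evals(cases: list[EvalCase], run_skill: Callable[[str], str]) -> dict:
    """Run every case through `run_skill` and report pass rate and elapsed time."""
    passed = 0
    start = time.perf_counter()
    for case in cases:
        output = run_skill(case.prompt)
        # A case passes only if every expected substring appears in the output.
        if all(s in output for s in case.expected_substrings):
            passed += 1
    elapsed = time.perf_counter() - start
    return {
        "passed": passed,
        "total": len(cases),
        "pass_rate": passed / len(cases) if cases else 0.0,
        "elapsed_seconds": elapsed,
    }


def fake_skill(prompt: str) -> str:
    """Stand-in for a real skill run; it only handles prompts mentioning 'name'."""
    return "Filled field: name" if "name" in prompt else "Could not locate field"


cases = [
    EvalCase("Fill in the name field", ["Filled field"]),
    EvalCase("Fill in the date field", ["Filled field"]),
]
report = run_evals(cases, fake_skill)
print(f"{report['passed']}/{report['total']} passed ({report['pass_rate']:.0%})")
```

Running the same cases against two versions of a skill and comparing the two reports is the basic shape of an A/B comparison.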

Two Types of Skills

Capability Uplift Skills — Help Claude do something the base model can’t do consistently (PDF, DOCX, PPTX creation)

Encoded Preference Skills — Document your team’s workflows so Claude follows your process every time

Real Results

Anthropic tested the PDF skill on non-fillable forms:

| Test | With Skill | Without Skill |
| --- | --- | --- |
| Pass Rate | 5/5 (100%) | 2/5 (40%) |

The skill clearly adds value where the base model fails.

I ran the same test on PDFs and found similar results, which was eye-opening.

Installing the Plugin

# Add the marketplace

/plugin marketplace add anthropics/skills

# Install skill-creator

/plugin install skill-creator@anthropic-agent-skills

I tested the PDF skill on a job application form and documented the entire process — what worked, what broke, and how the plugin measures it.

You can learn more here — Claude Code Skill-Creator Measures If Your Agent Skills Work

#2) Ultrathink Is Back — Version 2.1.68

A fresh Claude Code update reintroduced Ultrathink, changing how you manage thinking effort.

What Changed in 2.1.68

Opus 4.6 now defaults to medium effort for Max and Team subscribers

Opus 4 and 4.1 are gone from the first-party API — users are moved to Opus 4.6

Ultrathink is back after being removed in an earlier release

High effort on every prompt was overkill. Renaming a variable doesn’t need deep thinking.

Medium effort handles 90% of daily tasks. But that other 10% — architecture decisions, complex debugging, security-critical code — needs more.

That’s where Ultrathink makes sense.

Effort Levels

| Method | Effort Level | Duration |
| --- | --- | --- |
| Default (2.1.68) | Medium | Permanent |
| /model → High | High | Permanent |
| ultrathink | High | One turn only |

How Ultrathink Works

Type ultrathink before your prompt. Claude switches to high effort for that one turn, then returns to your default.

It only works if your current effort is medium or low. If you’re already on high, it does nothing.
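The behavior described above can be modeled in a few lines of Python. This is a toy model of the rules as stated in this issue, not Claude Code's implementation: `effective_effort` is a hypothetical function, and treating the keyword as a prefix check is an assumption based on "type ultrathink before your prompt".

```python
EFFORT_LEVELS = ("low", "medium", "high")


def effective_effort(default_effort: str, prompt: str) -> str:
    """Return the effort level used for a single turn in this toy model.

    Assumption: the keyword raises this one turn to "high" and never changes
    the stored default, so the next turn falls back to `default_effort`.
    """
    if default_effort not in EFFORT_LEVELS:
        raise ValueError(f"unknown effort level: {default_effort}")
    # Assumption: the keyword is detected at the start of the prompt.
    if prompt.strip().lower().startswith("ultrathink"):
        return "high"
    return default_effort


print(effective_effort("medium", "ultrathink find the race condition"))
print(effective_effort("medium", "rename this variable"))
print(effective_effort("high", "ultrathink review this module"))
```

The third call shows why the keyword does nothing when you are already on high: the turn would have run at high effort anyway.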

My Test

I gave Claude Code the same debugging task at both effort levels: a Node.js API with a race condition.

Medium effort: Identified the cause, suggested a fix.

Ultrathink: Walked through the entire request lifecycle, found two separate issues (the race condition + a token expiry edge case), and outlined a testing strategy.

From my findings, Medium gives you the answer. Ultrathink gives you the answer, plus things you didn’t ask about.

You can learn more here — Claude Code Ultrathink Is Back In New Update (I Just Tested It)

#3) Native /voice Mode — Stop Typing, Start Talking

Claude Code introduced voice mode last week. You can now use the /voice command.

You no longer need MCP workarounds or paid OpenAI Whisper API calls. It’s built in.

How It Works

Type /voice to toggle it on.

Push-to-Talk: Hold Space, speak, release. Your words stream in real-time at your cursor.

Hybrid Prompts: Type half a prompt, hold Space to voice the messy middle, keep typing.

Fix the auth middleware in [hold Space] — the token validation

is failing silently when the expiry timestamp is malformed,

it should throw a proper 401 [release] — and add a test for it.

What You Get

No extra cost — Voice transcription doesn’t count against rate limits

Cross-platform — Works on macOS, Linux, and Windows

No cloud dependency — Runs locally inside your Claude Code session

Rollout

It’s rolling out to ~5% of users first.

Check your Claude Code welcome screen — if you’re in the rollout, you’ll see a note confirming voice mode is available.

If you are not in it yet (I am not either), the rollout is expanding over the coming weeks. I’ll run a full build test to show you how it works.

You can learn more here — Claude Code Voice Is Here /voice (You Can Now Talk & Stop Typing)

#4) Ollama Subagents & Web Search — Run Parallel Agents at No Cost

You can now run Claude Code subagents and web search using Ollama cloud models — without burning your Anthropic tokens.

One Command to Launch

ollama launch claude --model minimax-m2.5:cloud

Works with any model on Ollama’s cloud.

Subagents in Action

I built an expense tracker using three parallel subagents:

frontend-ui-expert — HTML, CSS, vanilla JS (11.4k tokens)

Backend agent — FastAPI routes and models (11.0k tokens)

Project structure agent — Files and README (10.8k tokens)

All three ran simultaneously. Project completed in minutes.

Then I spun up three more subagents to audit the code. They found real issues:

CORS vulnerability (allow_credentials=True with wildcard origins)

Deprecated datetime.utcnow()

Float for monetary values instead of Decimal
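For readers who have not run into these three findings before, here is a small standard-library Python sketch of each one. It is not the code from my expense tracker: `is_unsafe_cors`, `utc_now`, and `total_amount` are hypothetical helpers written only to illustrate the issues.

```python
from datetime import datetime, timezone
from decimal import Decimal


def is_unsafe_cors(allow_origins: list[str], allow_credentials: bool) -> bool:
    """Flag the combination the audit reported: credentials with wildcard origins."""
    return allow_credentials and "*" in allow_origins


def utc_now() -> datetime:
    """Timezone-aware replacement for datetime.utcnow(), deprecated since Python 3.12."""
    return datetime.now(timezone.utc)


def total_amount(amounts: list[str]) -> Decimal:
    """Sum monetary amounts exactly by parsing them as Decimal instead of float."""
    return sum((Decimal(a) for a in amounts), Decimal("0"))


print(is_unsafe_cors(["*"], True))
print(utc_now().tzinfo)
print(0.1 + 0.2 == 0.3)  # False: binary floats cannot represent 0.1 exactly
print(total_amount(["0.10", "0.20"]) == Decimal("0.30"))
```

Passing amounts as strings matters here: `Decimal(0.1)` would inherit the float's rounding error, while `Decimal("0.10")` stays exact.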

Web Search Works Too

Subagents can research topics in parallel:

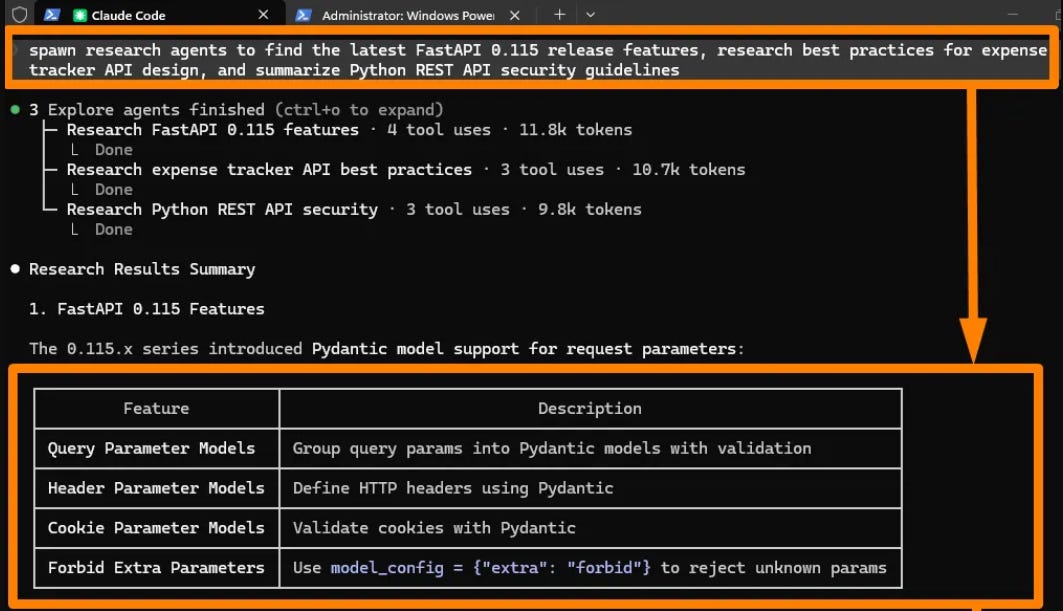

spawn research agents to find the latest FastAPI 0.115 release features,

research best practices for expense tracker API design, and summarize

Python REST API security guidelines

Three research agents returned structured tables with actionable recommendations.

Recommended Models

Not all Ollama models support subagents and web search. These three work:

minimax-m2.5:cloud

glm-5:cloud

kimi-k2.5:cloud

This setup works best for prototypes and learning. For production work or sensitive codebases, stick with the paid API.

But for experimenting without watching your bill — or when Anthropic has an outage — this is the move.

You can learn more here — How I’m Running Claude Code Subagents & Web Search (At No Cost)

Finally, this newsletter belongs to all of us. If there’s something that can make it better or something you don’t like, please let me know.

See you in the next issue of Last Week In Claude Code.

Claude Code Masterclass

Let’s Build It Together

— Joe Njenga
